kubernetes-kubeadm

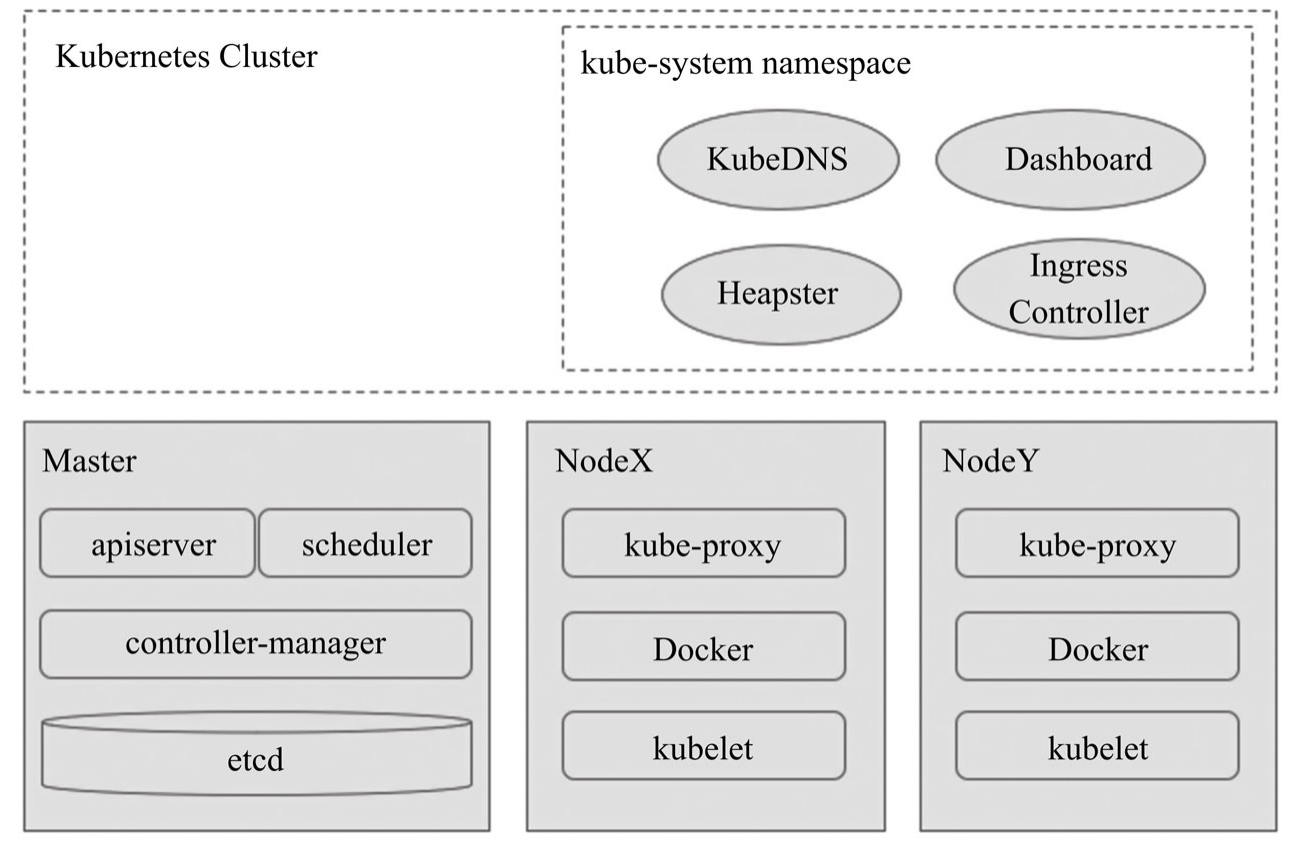

Overview

Machine preparation

Alibaba Cloud, CentOS 7.8. dpk1: 2 vCPU / 8 GB; dpk2, dpk3, dpk4: 4 vCPU / 16 GB each.

dpk1 192.168.1.69

dpk2 192.168.1.70

dpk3 192.168.1.72

dpk4 192.168.1.71
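If these hostnames are not resolvable through DNS, one option is to map them in /etc/hosts on every machine. The following is a minimal sketch that assumes the four addresses listed above; the function name hosts_entries is only illustrative and not part of any tool.

```shell
# Print /etc/hosts lines for the four machines listed above.
# hosts_entries is a hypothetical helper name.
hosts_entries() {
  cat << 'EOF'
192.168.1.69 dpk1
192.168.1.70 dpk2
192.168.1.72 dpk3
192.168.1.71 dpk4
EOF
}

# Review the output first, then append it as root:
#   hosts_entries >> /etc/hosts
hosts_entries
```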

Deploy Docker

First install Docker on all machines; see https://kubernetes.io/docs/setup/production-environment/container-runtimes/#docker

or http://as4k.top/containerization/xdocker

Deployment steps

############################################ 1. Configure the yum repository (CentOS / RHEL / Fedora) ########################################

cat << 'EOF' > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=0

EOF

############################################ 2. Install kubectl, kubeadm, and kubelet ########################################

yum install kubeadm-1.16.2-0.x86_64 kubectl-1.16.2-0.x86_64 kubelet-1.16.2-0.x86_64

==========================================================================================================

Package Arch Version Repository Size

==========================================================================================================

Installing:

kubeadm x86_64 1.16.2-0 kubernetes 9.5 M

kubectl x86_64 1.16.2-0 kubernetes 10 M

kubelet x86_64 1.16.2-0 kubernetes 22 M

Installing for dependencies:

conntrack-tools x86_64 1.4.4-7.el7 base 187 k

cri-tools x86_64 1.13.0-0 kubernetes 5.1 M

kubernetes-cni x86_64 0.7.5-0 kubernetes 10 M

libnetfilter_cthelper x86_64 1.0.0-11.el7 base 18 k

libnetfilter_cttimeout x86_64 1.0.0-7.el7 base 18 k

libnetfilter_queue x86_64 1.0.2-2.el7_2 base 23 k

socat x86_64 1.7.3.2-2.el7 base 290 k

==========================================================================================================

############################################ 3. Enable kubelet at boot and start it ############################################

systemctl enable kubelet && systemctl start kubelet

############################################ 4. Add kernel tuning parameters ################################################

cat << 'EOF' > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

# Load br_netfilter first: the net.bridge.* keys do not exist until the module is loaded

modprobe br_netfilter

lsmod | grep br_netfilter

sysctl --system
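To confirm that both bridge settings are in the configuration file before continuing, a small helper can check it. This is only a sketch: check_k8s_sysctl is a hypothetical name, and it inspects the file contents, not the live kernel values.

```shell
# Return 0 if the given sysctl file sets both bridge keys to 1.
# Defaults to the file written in step 4.
check_k8s_sysctl() {
  conf="${1:-/etc/sysctl.d/k8s.conf}"
  for key in net.bridge.bridge-nf-call-ip6tables net.bridge.bridge-nf-call-iptables; do
    # Accept "key = 1" with optional spaces around "=".
    if ! grep -Eq "^[[:space:]]*${key}[[:space:]]*=[[:space:]]*1[[:space:]]*$" "$conf"; then
      echo "missing or not 1: ${key}" >&2
      return 1
    fi
  done
  echo "ok: ${conf}"
}
```

Once br_netfilter is loaded, the live value can also be read with sysctl net.bridge.bridge-nf-call-iptables.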

############################################ 5. Initialize the Kubernetes master node (set up the Kubernetes control plane) ############

cat << 'EOF' > kubeadm-init.sh

localip=`hostname -I | awk '{print $1}'`

kubeadm init --apiserver-advertise-address $localip \

--apiserver-bind-port 6443 \

--control-plane-endpoint $localip \

--pod-network-cidr 10.88.1.0/24 \

--service-cidr 10.96.0.0/12 \

--image-repository registry.cn-hangzhou.aliyuncs.com/google_containers \

--kubernetes-version v1.16.2 \

--v=5

EOF

Run the script (bash kubeadm-init.sh). When it finishes it prints output similar to the following; save it for later use:

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities

and service account keys on each node and then running the following as root:

kubeadm join 192.168.1.69:6443 --token 82mejo.u6ywdbsqzty8j2gx \

--discovery-token-ca-cert-hash sha256:4006c47299eda847a248cf3702dc38211be9ae13054fcf428f17de1b26633fa0 \

--control-plane

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.1.69:6443 --token 82mejo.u6ywdbsqzty8j2gx \

--discovery-token-ca-cert-hash sha256:4006c47299eda847a248cf3702dc38211be9ae13054fcf428f17de1b26633fa0
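The bootstrap token in the join command expires (24 hours by default), so the saved command may stop working for nodes added later. Below is a sketch of two helpers to run as root on the control-plane node. The function names are illustrative, and ca_cert_hash assumes an RSA CA key, which is what kubeadm generates by default.

```shell
# Print a fresh worker join command (creates a new bootstrap token).
new_join_command() {
  kubeadm token create --print-join-command
}

# Recompute the hex digest used after "sha256:" in --discovery-token-ca-cert-hash.
# The default path is where kubeadm writes the cluster CA certificate.
ca_cert_hash() {
  ca="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -noout -in "$ca" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}
```

Existing tokens and their remaining lifetime can be listed with kubeadm token list.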

############################################ 6. kubectl access on the master node ##############################################

Follow the instructions printed in step 5. Once configured, verify with kubectl version.

############################################## 7. Install the network add-on #################################################

curl -sSL -o weave.yaml "https://cloud.weave.works/k8s/net?k8s-version=$(kubectl version | base64 | tr -d '\n')"

kubectl apply -f "https://cloud.weave.works/k8s/net?k8s-version=$(kubectl version | base64 | tr -d '\n')"

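############################################ 8. Install the Kubernetes worker nodes ############################################

On each worker machine, perform steps 1, 2, 3, and 4 above.

Then run the worker join command printed by the step 5 script to join the cluster as a worker node.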

############################################ 9. Let the master also act as a worker node (optional) ############################################

[root@k8s001 ~]# kubectl taint nodes --all node-role.kubernetes.io/master-

node/k8s001 untainted

############################################ 10. Check the deployment status ############################################

[root@dpk1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

dpk1 Ready master 16m v1.16.2

dpk2 Ready <none> 117s v1.16.2

dpk3 Ready <none> 114s v1.16.2

dpk4 Ready <none> 112s v1.16.2
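When a cluster has more nodes, scanning the table by eye gets tedious. The sketch below filters kubectl get nodes output for nodes whose STATUS column is not exactly Ready. not_ready_nodes is a hypothetical helper name, and a cordoned node (Ready,SchedulingDisabled) is also reported by this simple comparison.

```shell
# Read `kubectl get nodes` output on stdin and print the names of nodes
# whose STATUS column is not exactly "Ready". The header row is skipped.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Example with captured output; on a live cluster use:
#   kubectl get nodes | not_ready_nodes
not_ready_nodes << 'EOF'
NAME STATUS ROLES AGE VERSION
dpk1 Ready master 16m v1.16.2
dpk2 NotReady <none> 117s v1.16.2
EOF
```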

[root@dpk1 ~]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-67c766df46-2ltns 1/1 Running 0 35m

kube-system coredns-67c766df46-whcqh 1/1 Running 0 35m

kube-system etcd-dpk1 1/1 Running 0 34m

kube-system kube-apiserver-dpk1 1/1 Running 0 34m

kube-system kube-controller-manager-dpk1 1/1 Running 0 34m

kube-system kube-proxy-kcdzw 1/1 Running 0 35m

kube-system kube-proxy-qpdsx 1/1 Running 0 20m

kube-system kube-proxy-tnrj9 1/1 Running 0 20m

kube-system kube-proxy-v2hjp 1/1 Running 0 20m

kube-system kube-scheduler-dpk1 1/1 Running 0 34m

kube-system weave-net-f4h7z 2/2 Running 1 20m

kube-system weave-net-kvcwt 2/2 Running 0 20m

kube-system weave-net-ls7cn 2/2 Running 0 20m

kube-system weave-net-rps7q 2/2 Running 0 24m

kubeadm config view

[root@dpk1 kubernetes]# kubectl cluster-info

Kubernetes master is running at https://192.168.1.69:6443

KubeDNS is running at https://192.168.1.69:6443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy

Create applications using a private image registry

kubectl create secret docker-registry regcred --docker-server=registry.as4k.com --docker-username=as4k --docker-password=123456 --docker-email=xys4k@qq.com
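A Pod uses this secret by listing it under imagePullSecrets, and the secret must live in the same namespace as the Pod. The sketch below writes such a manifest; the Pod name, container name, and image path are placeholders that do not come from these notes.

```shell
# Write a Pod manifest that pulls its image with the regcred secret.
# private-app and registry.as4k.com/demo/app:latest are placeholder values.
write_private_pod() {
  out="${1:-private-pod.yaml}"
  cat > "$out" << 'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: private-app
spec:
  containers:
    - name: app
      image: registry.as4k.com/demo/app:latest
  imagePullSecrets:
    - name: regcred
EOF
}

# Usage on a working cluster:
#   write_private_pod private-pod.yaml && kubectl apply -f private-pod.yaml
```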

Constrained scheduling: label the nodes

[root@k8s001 ~]#

[root@k8s001 ~]# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s001 Ready master 6h2m v1.16.2 192.168.1.15 <none> CentOS Linux 7 (Core) 3.10.0-957.21.3.el7.x86_64 docker://18.6.3

k8s002 Ready <none> 3h9m v1.16.2 192.168.1.20 <none> CentOS Linux 7 (Core) 3.10.0-957.21.3.el7.x86_64 docker://18.6.3

k8s003 Ready <none> 3h9m v1.16.2 192.168.1.14 <none> CentOS Linux 7 (Core) 3.10.0-957.21.3.el7.x86_64 docker://18.6.3

[root@k8s001 ~]#

[root@k8s001 ~]# kubectl label nodes k8s001 role=node1

node/k8s001 labeled

[root@k8s001 ~]# kubectl label nodes k8s002 role=node2

node/k8s002 labeled

[root@k8s001 ~]# kubectl label nodes k8s003 role=node3

node/k8s003 labeled

[root@k8s001 ~]# kubectl get nodes --show-labels

NAME STATUS ROLES AGE VERSION LABELS

k8s001 Ready master 6h7m v1.16.2 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s001,kubernetes.io/os=linux,node-role.kubernetes.io/master=,role=node1

k8s002 Ready <none> 3h14m v1.16.2 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s002,kubernetes.io/os=linux,role=node2

k8s003 Ready <none> 3h14m v1.16.2 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s003,kubernetes.io/os=linux,role=node3

[root@k8s001 ~]#
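Once the nodes carry labels, a Pod can be pinned to one of them with nodeSelector. The following sketch writes a manifest that targets the node labelled role=node2; the Pod name and the nginx image are placeholders chosen for illustration.

```shell
# Write a Pod manifest that is only scheduled onto nodes labelled role=node2.
# pinned-nginx and the nginx image are placeholder values.
write_pinned_pod() {
  out="${1:-pinned-pod.yaml}"
  cat > "$out" << 'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: pinned-nginx
spec:
  nodeSelector:
    role: node2
  containers:
    - name: nginx
      image: nginx
EOF
}

# Usage on a working cluster:
#   write_pinned_pod pinned-pod.yaml && kubectl apply -f pinned-pod.yaml
#   kubectl get pod pinned-nginx -o wide   # the NODE column should show k8s002
```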

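Deploy the Dashboard

1 Download and deploy recommended-v2.0.1.yaml (the file contents are listed later in this document)

kubectl create -f recommended-v2.0.1.yaml

After deployment, netstat -lntup | grep 30000 shows that the host is listening on port 30000; the Dashboard is accessed in a browser through this port.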

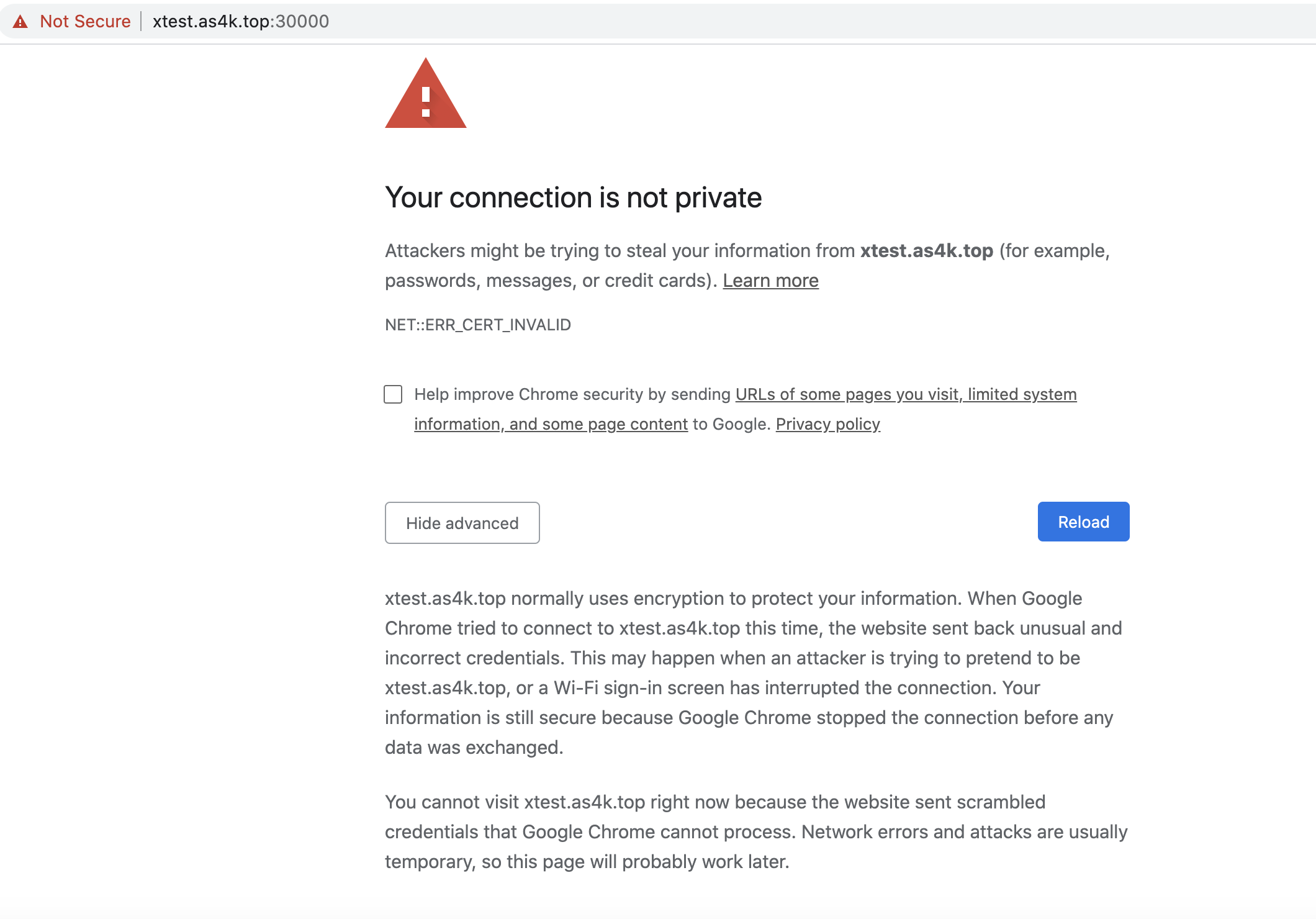

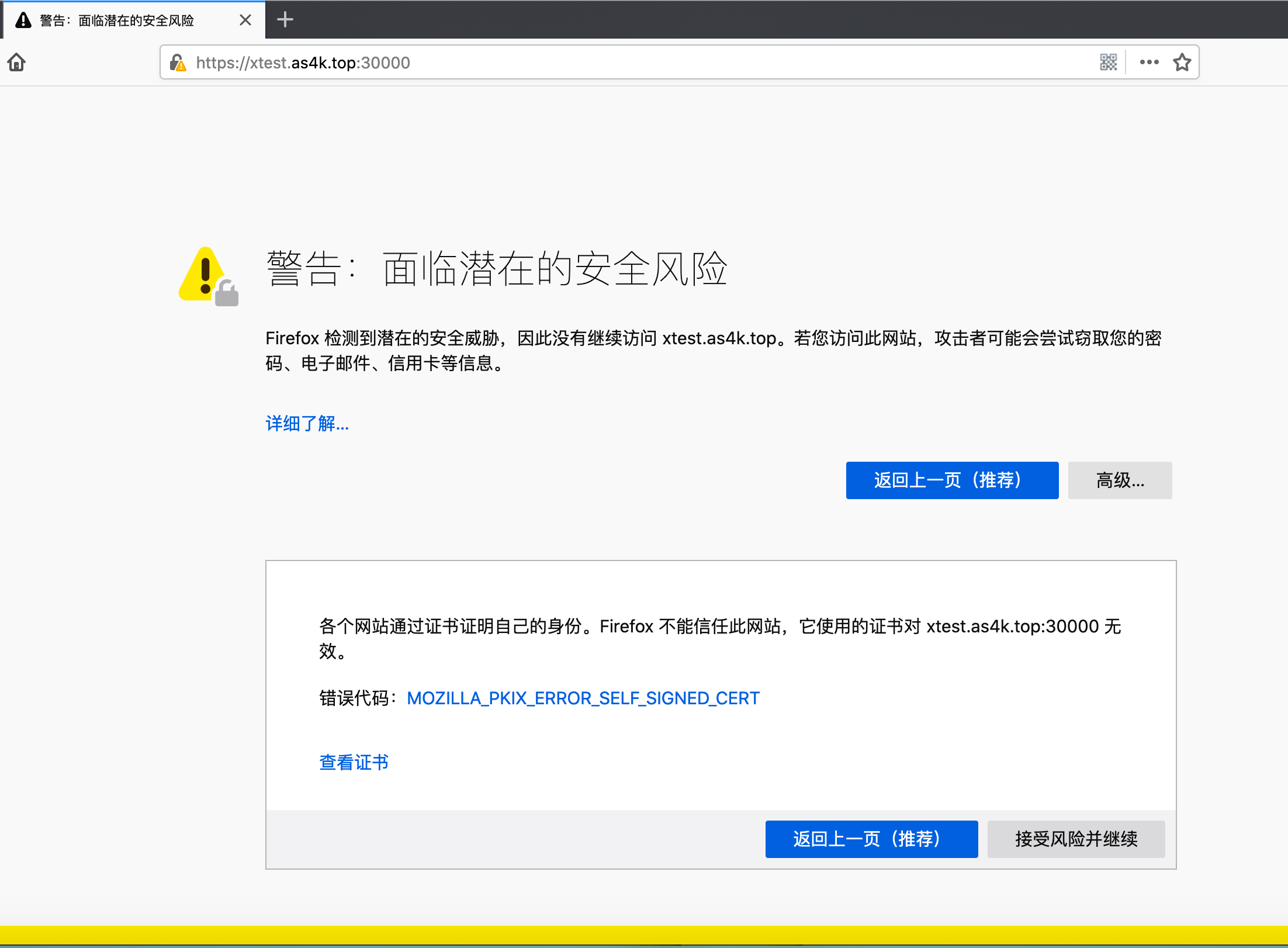

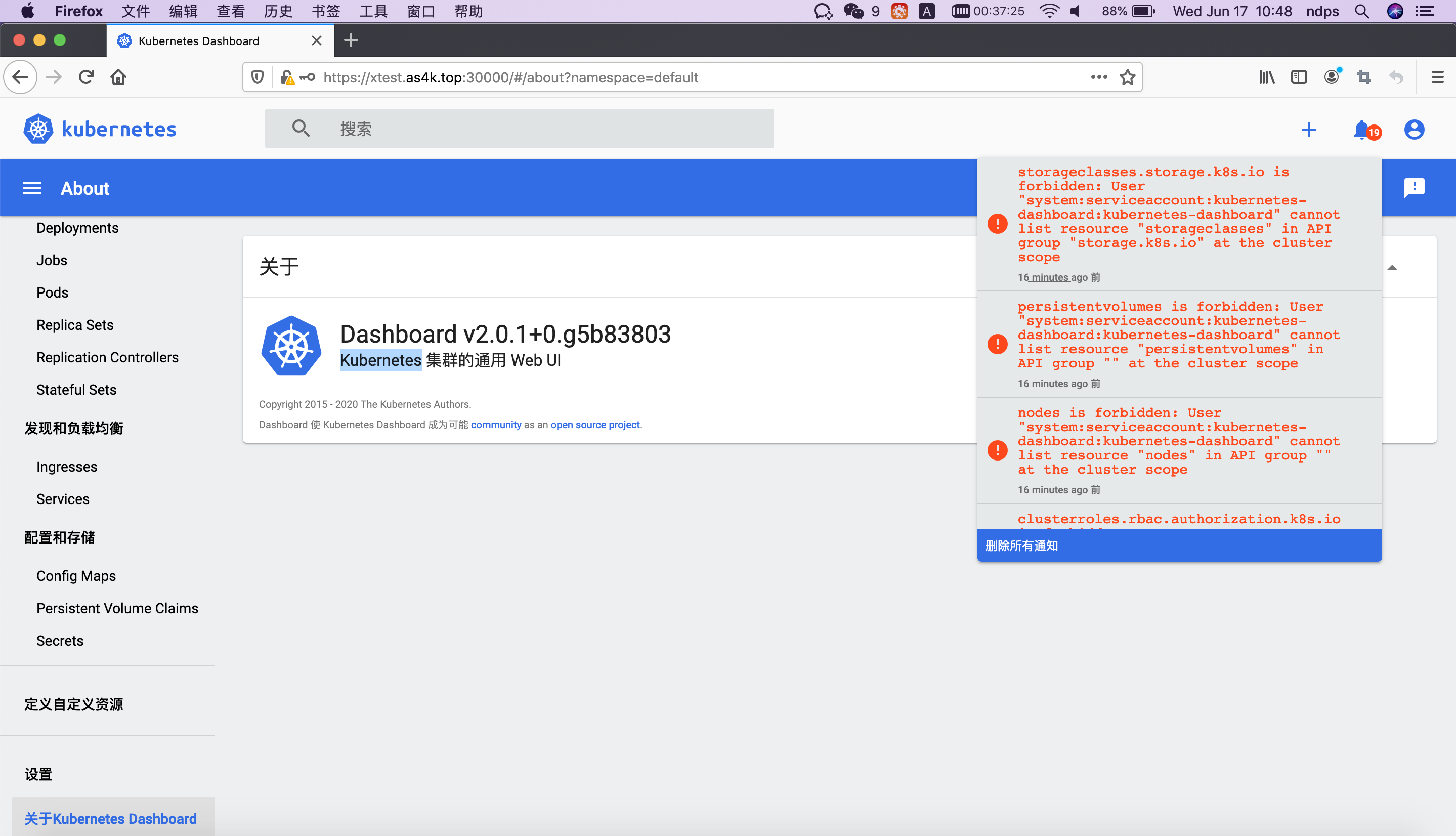

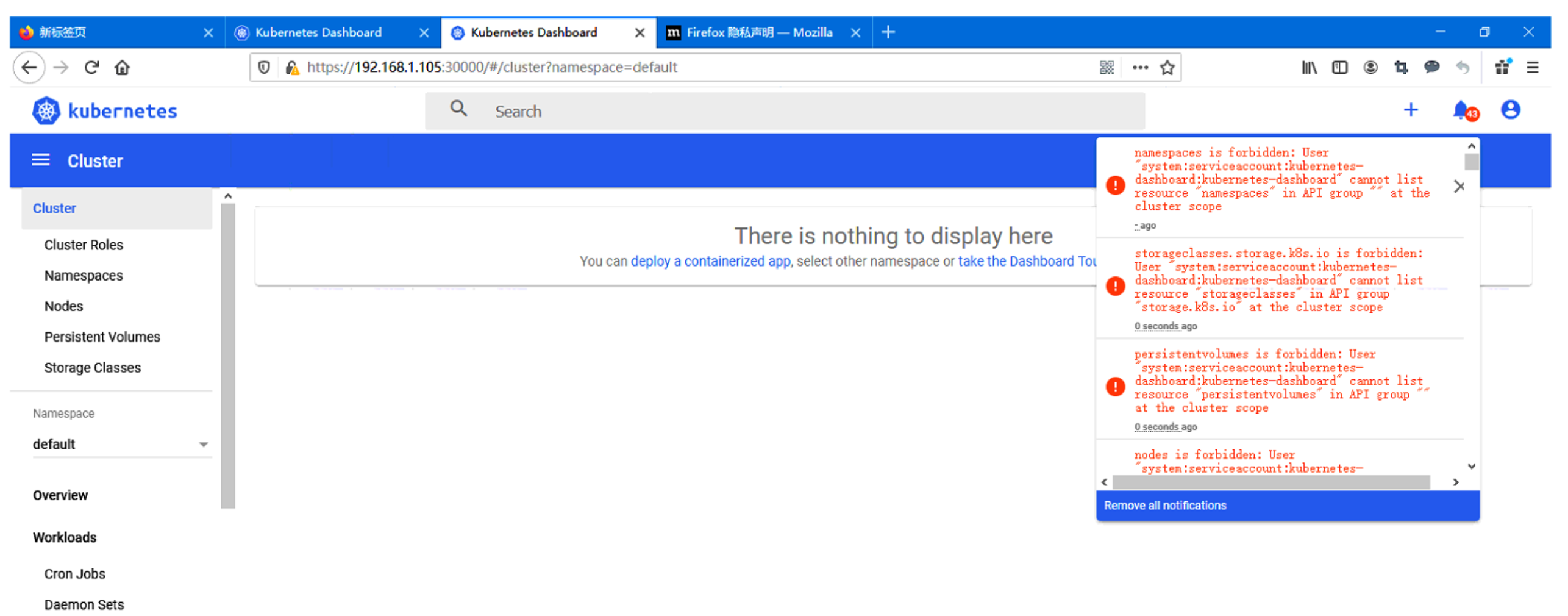

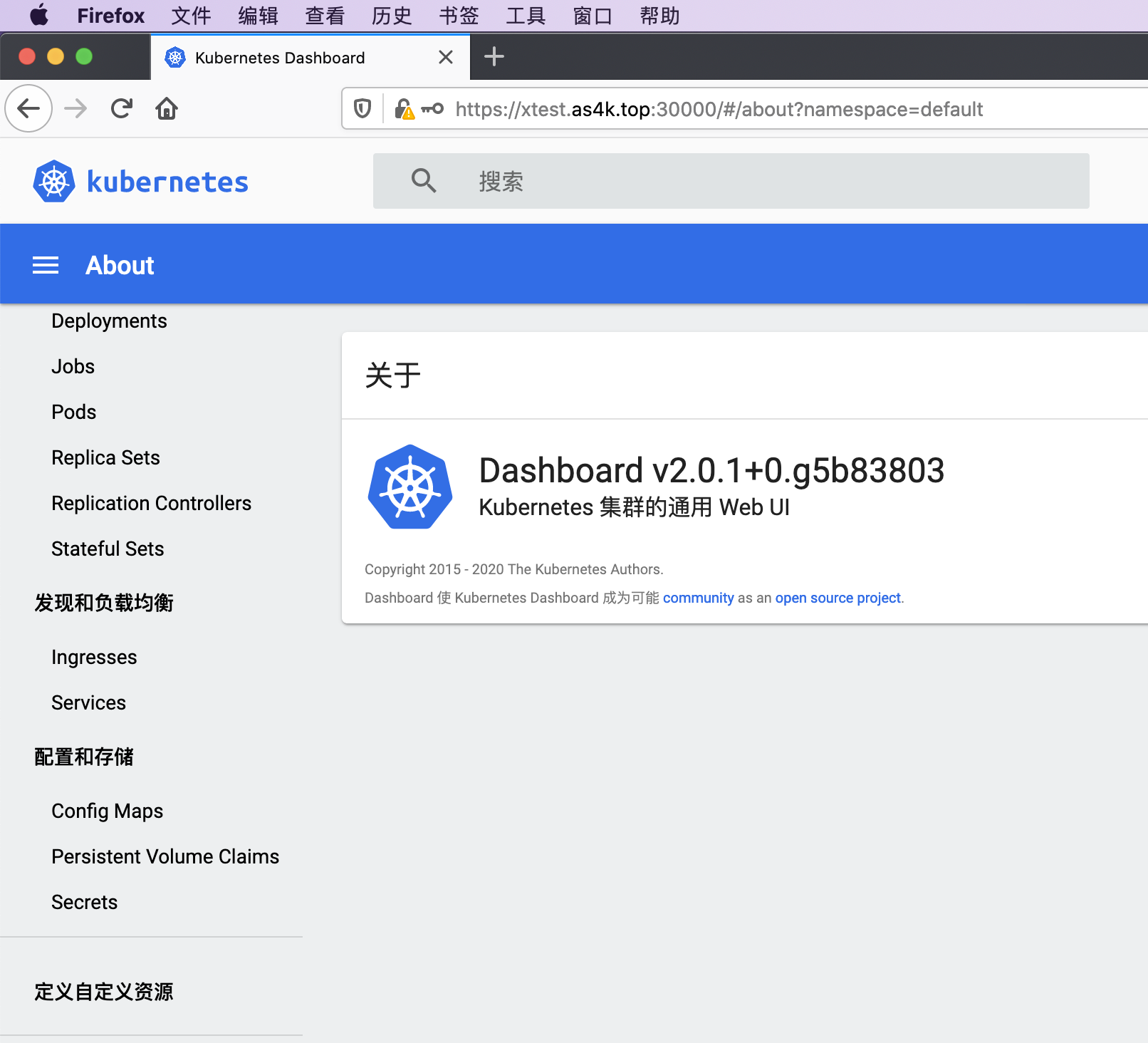

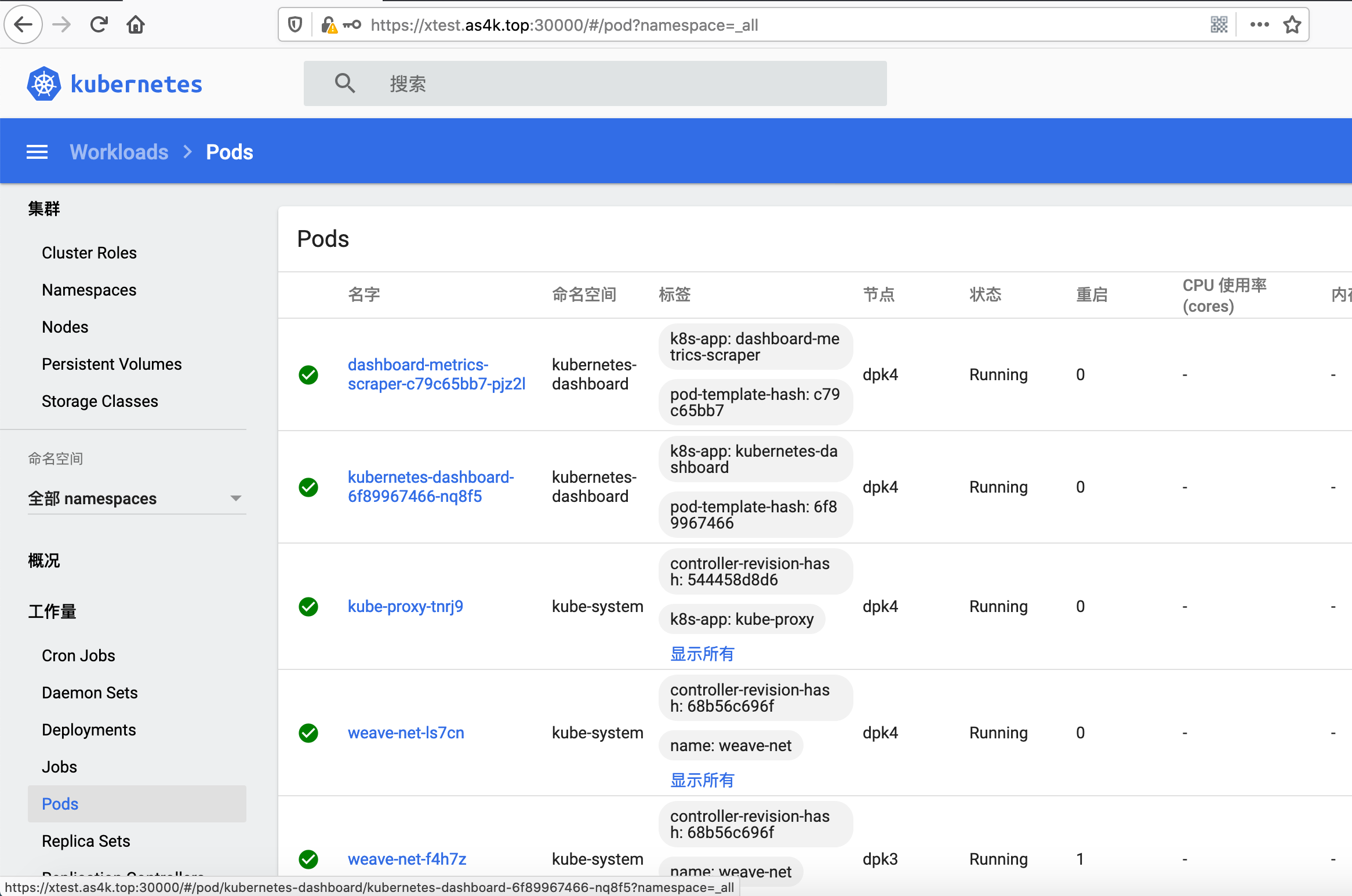

2 启用火狐浏览器(Firefox Version 77.0.1)访问 https://xtest.as4k.top:30000 ,使用其它浏览器(如谷歌),坑贼多,相关截图如下

3 Log in with the default token; this user has limited permissions

[root@dpk1 ~]# kubectl describe secrets -n kubernetes-dashboard `kubectl get secrets -n kubernetes-dashboard | grep "kubernetes-dashboard-token" | awk '{print $1}'`

Name: kubernetes-dashboard-token-n242w

Namespace: kubernetes-dashboard

Labels: <none>

Annotations: kubernetes.io/service-account.name: kubernetes-dashboard

kubernetes.io/service-account.uid: a50a2adc-e401-42a7-a2d2-0b00bb6c6dcd

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1025 bytes

namespace: 20 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6ImJTZC1qMHBIS1R5VW9lZzJ1bnRqQ2h6M3lMTXdGcVQ2V1dFQWVxdENPeTAifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC10b2tlbi1uMjQydyIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6ImE1MGEyYWRjLWU0MDEtNDJhNy1hMmQyLTBiMDBiYjZjNmRjZCIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlcm5ldGVzLWRhc2hib2FyZDprdWJlcm5ldGVzLWRhc2hib2FyZCJ9.aW0ms8UrAyKq0xF9E6c0mFA47oXfpI56FYbrp2X0bXPTbtaF3X567AD_Ooixn4YUefyVkvvvPAvOQ1NYN-0yCMBk-v-fK6ol-GsbipWYYyN206KX3do9tAV1aT0n2cEIGhiurM10-gt5TJXyPegGBzhjrG6IVX7LF_Q1zBV8qToJUpnPvu-u9lCow1xM5ZEBrb8EVSXYB6xLKS30Y1k5HHrOLpob_fEvMOaIEdNu4chl6kHwDfmqMuuSM8xGZQqbAJEQgZ015VBY64gVN_UFf5qNTuYBgDLRG-_ynfhdguk1IF19UPfFRJTb9Rrt9wQRPQBabjLnz3SvKKF6XFOV4w
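The token is a JWT, so its middle segment can be decoded to see which service account and namespace it belongs to. A minimal sketch follows, assuming a base64 command that supports -d (GNU coreutils). jwt_payload is a hypothetical helper and does not verify the signature.

```shell
# Print the decoded payload (second dot-separated segment) of a JWT passed as $1.
jwt_payload() {
  # Convert base64url characters to the standard base64 alphabet.
  seg=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # base64url omits padding; restore it so base64 -d accepts the input.
  while [ $(( ${#seg} % 4 )) -ne 0 ]; do
    seg="${seg}="
  done
  printf '%s' "$seg" | base64 -d
}

# Usage: jwt_payload "<token value>"
```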

4 Create a super-administrator user from a YAML file

################################# 4.1 File contents ################################

[root@k8s-master01 manifests]# cat dashboard-admin.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

  name: admin-user

  namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: admin-user

  annotations:

    rbac.authorization.kubernetes.io/autoupdate: "true"

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: cluster-admin

subjects:

  - kind: ServiceAccount

    name: admin-user

    namespace: kube-system

################################# 4.2 Create the super-administrator user ################################

kubectl create -f dashboard-admin.yaml

################################# 4.3 Get the token for the super-administrator user ######################

[root@dpk1 kubernetes]# kubectl describe secrets -n kube-system `kubectl get secrets --all-namespaces | grep admin-user | awk '{print $2}'`

Name: admin-user-token-7mj5j

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: admin-user

kubernetes.io/service-account.uid: 29496143-34c5-4984-aca6-22b33860840e

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1025 bytes

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6ImJTZC1qMHBIS1R5VW9lZzJ1bnRqQ2h6M3lMTXdGcVQ2V1dFQWVxdENPeTAifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLTdtajVqIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiIyOTQ5NjE0My0zNGM1LTQ5ODQtYWNhNi0yMmIzMzg2MDg0MGUiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06YWRtaW4tdXNlciJ9.QSHb3cW_dEOl7-wEt7UDqC-jRUyxJqMn6txsxt6aZfY3LuMQwInGBi0oivEpsUmQRve2IdmqazASiesZuFHsJ16NI8VLfQZ_T9WiraKZ8L47bBifLtd3NA57bh46daE4n4E6o5KW6VOX1xFiAuP6VDFnTGOjRSrZ6-SFBFvQlok8NYjc6PxfqCvhO7QUaQxeY1lw3kg0OiFECtpTt9eXcaduKnJG4CRsLJ3C1zwnRihjR2NRG7viXqvtrR1iW5srRpowVAoYymPYpTOWHKXF6ciRtzHeJF4l8z4K7-BiNY6yu5APcvphX3pmER7hvMGsFPjbPNQlSSplqVmDmIU_LQ
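Copying the long token out of the describe output by hand is error-prone. The sketch below keeps only the token value from that output. token_from_describe is a hypothetical helper that relies on the "token:" label shown above.

```shell
# Read `kubectl describe secret` output on stdin and print only the token value.
token_from_describe() {
  awk '$1 == "token:" { print $2 }'
}

# Example with abbreviated captured output; on a live cluster, pipe the
# kubectl describe secrets command from step 4.3 into token_from_describe.
token_from_describe << 'EOF'
ca.crt: 1025 bytes
namespace: 11 bytes
token: abc.def.ghi
EOF
```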

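4.4 Log in with the super-administrator token obtained above; the Kubernetes Dashboard then has the highest privileges

5 Redeploy

If a step went wrong and the Dashboard needs to be redeployed, proceed as follows: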

kubectl delete -f recommended-v2.0.1.yaml

kubectl create -f recommended-v2.0.1.yaml

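Reverse-proxy the Dashboard through nginx with a publicly trusted certificate

With the self-signed certificate used by the default installation, browsers other than Firefox cannot open the page, and even Firefox shows assorted security warnings. With a certificate issued by a public CA, none of these problems occur.

Reference configuration for the K8S Dashboard certificate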

[root@dpk1 conf.d]# cat /etc/nginx/conf.d/k8s.as4k.com.conf

server {

listen 443 ssl;

server_name k8s.as4k.com;

root html;

index index.html index.htm;

ssl_certificate /etc/nginx/cert/as4k.com.crt;

ssl_certificate_key /etc/nginx/cert/as4k.com.key;

ssl_session_timeout 5m;

ssl_ciphers ECDHE-RSA-AES128-GCM-SHA256:ECDHE:ECDH:AES:HIGH:!NULL:!aNULL:!MD5:!ADH:!RC4;

ssl_protocols TLSv1 TLSv1.1 TLSv1.2;

ssl_prefer_server_ciphers on;

location / {

proxy_pass https://127.0.0.1:30000;

proxy_set_header Host $host;

proxy_connect_timeout 3600;

proxy_send_timeout 3600;

proxy_read_timeout 3600;

proxy_set_header X-Real-IP $remote_addr;

proxy_buffering off;

proxy_request_buffering off;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

}

}

server {

listen 80;

server_name k8s.as4k.com;

rewrite ^(.*)$ https://$host$1 permanent;

}

recommended v2.0.1

# cat recommended-v2.0.1.yaml

# https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.1/aio/deploy/recommended.yaml

# https://xtest.as4k.top:30000

# This content is modified from the official YAML: the Service is changed to type NodePort so that it maps directly to a host port

# Copyright 2017 The Kubernetes Authors.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

apiVersion: v1

kind: Namespace

metadata:

  name: kubernetes-dashboard

---

apiVersion: v1

kind: ServiceAccount

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard

  namespace: kubernetes-dashboard

---

# kind: Service

# apiVersion: v1

# metadata:

#   labels:

#     k8s-app: kubernetes-dashboard

#   name: kubernetes-dashboard

#   namespace: kubernetes-dashboard

# spec:

#   ports:

#     - port: 443

#       targetPort: 8443

#   selector:

#     k8s-app: kubernetes-dashboard

kind: Service

apiVersion: v1

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard

  namespace: kubernetes-dashboard

spec:

  ports:

    - port: 443

      targetPort: 8443

      nodePort: 30000

  selector:

    k8s-app: kubernetes-dashboard

  type: NodePort

---

apiVersion: v1

kind: Secret

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard-certs

  namespace: kubernetes-dashboard

type: Opaque

---

apiVersion: v1

kind: Secret

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard-csrf

  namespace: kubernetes-dashboard

type: Opaque

data:

  csrf: ""

---

apiVersion: v1

kind: Secret

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard-key-holder

  namespace: kubernetes-dashboard

type: Opaque

---

kind: ConfigMap

apiVersion: v1

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard-settings

  namespace: kubernetes-dashboard

---

kind: Role

apiVersion: rbac.authorization.k8s.io/v1

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard

  namespace: kubernetes-dashboard

rules:

  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.

  - apiGroups: [""]

    resources: ["secrets"]

    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]

    verbs: ["get", "update", "delete"]

  # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.

  - apiGroups: [""]

    resources: ["configmaps"]

    resourceNames: ["kubernetes-dashboard-settings"]

    verbs: ["get", "update"]

  # Allow Dashboard to get metrics.

  - apiGroups: [""]

    resources: ["services"]

    resourceNames: ["heapster", "dashboard-metrics-scraper"]

    verbs: ["proxy"]

  - apiGroups: [""]

    resources: ["services/proxy"]

    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]

    verbs: ["get"]

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard

rules:

  # Allow Metrics Scraper to get metrics from the Metrics server

  - apiGroups: ["metrics.k8s.io"]

    resources: ["pods", "nodes"]

    verbs: ["get", "list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard

  namespace: kubernetes-dashboard

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: Role

  name: kubernetes-dashboard

subjects:

  - kind: ServiceAccount

    name: kubernetes-dashboard

    namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: kubernetes-dashboard

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: kubernetes-dashboard

subjects:

  - kind: ServiceAccount

    name: kubernetes-dashboard

    namespace: kubernetes-dashboard

---

kind: Deployment

apiVersion: apps/v1

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard

  namespace: kubernetes-dashboard

spec:

  replicas: 1

  revisionHistoryLimit: 10

  selector:

    matchLabels:

      k8s-app: kubernetes-dashboard

  template:

    metadata:

      labels:

        k8s-app: kubernetes-dashboard

    spec:

      containers:

        - name: kubernetes-dashboard

          image: kubernetesui/dashboard:v2.0.1

          imagePullPolicy: Always

          ports:

            - containerPort: 8443

              protocol: TCP

          args:

            - --auto-generate-certificates

            - --namespace=kubernetes-dashboard

            # Uncomment the following line to manually specify Kubernetes API server Host

            # If not specified, Dashboard will attempt to auto discover the API server and connect

            # to it. Uncomment only if the default does not work.

            # - --apiserver-host=http://my-address:port

          volumeMounts:

            - name: kubernetes-dashboard-certs

              mountPath: /certs

            # Create on-disk volume to store exec logs

            - mountPath: /tmp

              name: tmp-volume

          livenessProbe:

            httpGet:

              scheme: HTTPS

              path: /

              port: 8443

            initialDelaySeconds: 30

            timeoutSeconds: 30

          securityContext:

            allowPrivilegeEscalation: false

            readOnlyRootFilesystem: true

            runAsUser: 1001

            runAsGroup: 2001

      volumes:

        - name: kubernetes-dashboard-certs

          secret:

            secretName: kubernetes-dashboard-certs

        - name: tmp-volume

          emptyDir: {}

      serviceAccountName: kubernetes-dashboard

      nodeSelector:

        "kubernetes.io/os": linux

      # Comment the following tolerations if Dashboard must not be deployed on master

      tolerations:

        - key: node-role.kubernetes.io/master

          effect: NoSchedule

---

kind: Service

apiVersion: v1

metadata:

  labels:

    k8s-app: dashboard-metrics-scraper

  name: dashboard-metrics-scraper

  namespace: kubernetes-dashboard

spec:

  ports:

    - port: 8000

      targetPort: 8000

  selector:

    k8s-app: dashboard-metrics-scraper

---

kind: Deployment

apiVersion: apps/v1

metadata:

  labels:

    k8s-app: dashboard-metrics-scraper

  name: dashboard-metrics-scraper

  namespace: kubernetes-dashboard

spec:

  replicas: 1

  revisionHistoryLimit: 10

  selector:

    matchLabels:

      k8s-app: dashboard-metrics-scraper

  template:

    metadata:

      labels:

        k8s-app: dashboard-metrics-scraper

      annotations:

        seccomp.security.alpha.kubernetes.io/pod: 'runtime/default'

    spec:

      containers:

        - name: dashboard-metrics-scraper

          image: kubernetesui/metrics-scraper:v1.0.4

          ports:

            - containerPort: 8000

              protocol: TCP

          livenessProbe:

            httpGet:

              scheme: HTTP

              path: /

              port: 8000

            initialDelaySeconds: 30

            timeoutSeconds: 30

          volumeMounts:

            - mountPath: /tmp

              name: tmp-volume

          securityContext:

            allowPrivilegeEscalation: false

            readOnlyRootFilesystem: true

            runAsUser: 1001

            runAsGroup: 2001

      serviceAccountName: kubernetes-dashboard

      nodeSelector:

        "kubernetes.io/os": linux

      # Comment the following tolerations if Dashboard must not be deployed on master

      tolerations:

        - key: node-role.kubernetes.io/master

          effect: NoSchedule

      volumes:

        - name: tmp-volume

          emptyDir: {}

Access via kubectl proxy

http://localhost:8001/api/v1/namespaces/kube-system/services/https:kubernetes-dashboard:/proxy/

http://k8s001:8001/api/v1/namespaces/kube-system/services/https:kubernetes-dashboard:/proxy/

kubectl proxy --port=8001 --address=0.0.0.0 --disable-filter=true

[root@k8s001 ~]# kubectl cluster-info

Kubernetes master is running at https://192.168.1.12:6443

KubeDNS is running at https://192.168.1.12:6443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy

http://k8s001:8001/api/v1/namespaces/kubernetes-dashboard/services/http:kubernetes-dashboard:/proxy/

http://192.168.1.105:8001/api/v1/namespaces/kubernetes-dashboard/services/http:kubernetes-dashboard:/proxy/
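The URLs above all follow one pattern: proxy host and port, then the namespace, then scheme:service-name: in the services path segment. The sketch below builds such a URL; proxy_url is a hypothetical helper. With the recommended-v2.0.1.yaml manifest from this document, the Service is in the kubernetes-dashboard namespace and the container serves HTTPS on 8443, so the https: prefix is probably the one that applies.

```shell
# Build the API-server proxy URL for a Service reached through kubectl proxy.
# Arguments: proxy host, proxy port, namespace, scheme (http or https), service name.
proxy_url() {
  printf 'http://%s:%s/api/v1/namespaces/%s/services/%s:%s:/proxy/\n' "$1" "$2" "$3" "$4" "$5"
}

# Dashboard v2 as deployed by the manifest in this document (assumed values).
proxy_url localhost 8001 kubernetes-dashboard https kubernetes-dashboard
```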

Clean up the K8S deployment environment

Run on the master and on every node

kubeadm reset
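kubeadm reset leaves some state behind for the operator to remove by hand: the CNI configuration, iptables rules, and the kubeconfig file. The sketch below prints the usual follow-up commands and only executes them when RUN=1 is set. post_reset_cleanup is a hypothetical helper. Flushing iptables also removes any unrelated firewall rules on the host, so review the list before running it.

```shell
# Print (default) or run the manual cleanup that kubeadm reset does not perform.
# Set RUN=1 to execute; otherwise the commands are only printed.
# Run as root on each node after kubeadm reset.
post_reset_cleanup() {
  for cmd in \
    "rm -rf /etc/cni/net.d" \
    "iptables -F" \
    "iptables -t nat -F" \
    "iptables -t mangle -F" \
    "iptables -X" \
    "rm -f $HOME/.kube/config"
  do
    if [ "${RUN:-0}" = "1" ]; then
      sh -c "$cmd"
    else
      echo "$cmd"
    fi
  done
}

# Dry run: shows what would be executed.
post_reset_cleanup
```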

Reference links

https://github.com/kubernetes/dashboard/blob/master/docs/user/access-control/README.md

https://github.com/kubernetes/dashboard

Mainstream Kubernetes deployment methods, part 02: deploying a highly available cluster with kubeadm

https://segmentfault.com/a/1190000018741112?utm_source=tag-newest

Installing Kubernetes v1.16 with kubeadm

https://www.kubernetes.org.cn/5846.html

Kubernetes v1.14.0 highly available master cluster deployment (kubeadm, offline installation)

https://www.kubernetes.org.cn/5273.html

Dashboard certificate generation

https://www.jianshu.com/p/f7ebd54ed0d1

https://github.com/kubernetes/kubernetes

http://docs.kubernetes.org.cn/

Building a custom Kubernetes cluster from scratch

http://docs.kubernetes.org.cn/774.html

Kubernetes 1.13.1 + etcd 3.3.10 + flanneld 0.10 cluster deployment

https://www.kubernetes.org.cn/5025.html

Blog category: kubernetes

https://www.cnblogs.com/yuezhimi/category/1340864.html

Certificate authentication in Kubernetes

https://www.kubernetes.org.cn/2540.html

Installing kubernetes-dashboard on a single CentOS 7 node

https://www.58jb.com/html/152.html

CoreDNS Manual

https://coredns.io/manual/toc/#installation

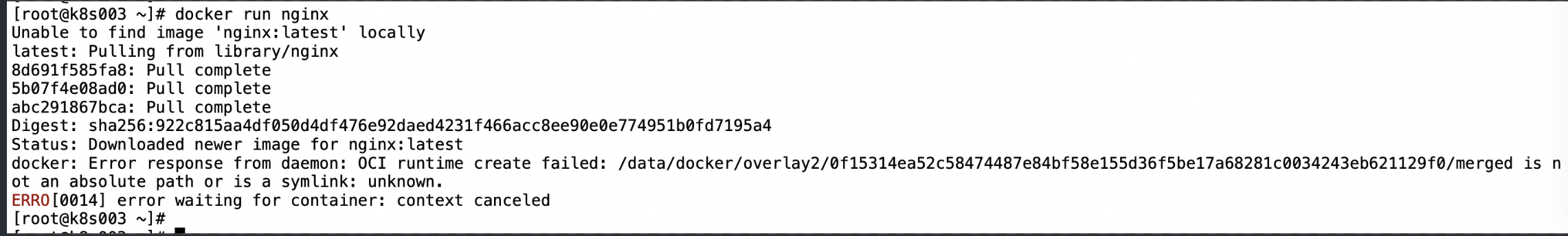

Errors encountered

Docker cannot be installed in a symlinked directory